Brute Forcing Keypoints: BoVW vs CNN

A comparative study benchmarking GPU-accelerated Bag-of-Visual-Words (BoVW) against CNNs on CIFAR-10/100. Scaling traditional BoVW to 50M keypoints with cuML matches the accuracy of a shallow CNN on CIFAR-10.

Note: a PDF write-up of the project is available, and the project source is hosted on GitHub at https://github.com/jonathondilworth/uom-vision.


GPU-Accelerated Classical Computer Vision vs Deep Learning for Image Classification

Abstract

Recent advancements in deep learning enable neural architectures that automatically extract features as a natural by-product of training. For image classification, this is most apparent in convolutional neural networks, which have received significant attention as GPU architectures have matured. However, the focus on neural representation learning has diverted attention away from classical techniques. This work poses the question: to what extent can modern GPU hardware, paired with software libraries such as CuPy and cuML, improve classical CV methods? We revisit Bag-of-Visual-Words (BoVW) for image classification, using cuML to scale codebook construction to 50 million keypoints on CIFAR-10. Our best BoVW configuration matches the classification accuracy of a modernised LeNet-5 variant, but falls short of the more powerful and compute-intensive VGG16 with batch normalisation. Ultimately, our results indicate that while modern hardware enables previously impractical scaling for classical methods, the fundamental limitations of BoVW (particularly vector quantisation error and the absence of a spatial hierarchy) persist when compared with deeper architectures. Future work could explore more sophisticated classical approaches. Source code and reproducible artefacts are available at https://github.com/jonathondilworth/uom-vision.
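The two core BoVW operations in the abstract can be sketched in a few lines of NumPy. These are codebook construction by k-means and hard assignment of descriptors to visual words. This is a minimal CPU sketch under stated assumptions:

- Plain Lloyd's k-means with random initialisation stands in for a GPU implementation such as cuML's `cuml.cluster.KMeans`.
- Random vectors stand in for 128-dimensional SIFT descriptors.
- The helper names `build_codebook` and `encode_image` are hypothetical.

The mean squared distance from each descriptor to its assigned word is one simple measure of the vector quantisation error mentioned above.

```python
import numpy as np

def build_codebook(descriptors, k, n_iter=20, seed=0):
    """Lloyd's k-means over training descriptors; returns a (k, d) codebook."""
    rng = np.random.default_rng(seed)
    # Initialise visual words by sampling k distinct training descriptors.
    codebook = descriptors[rng.choice(len(descriptors), size=k, replace=False)].copy()
    for _ in range(n_iter):
        # (n, k) squared Euclidean distances, built by broadcasting.
        d2 = ((descriptors[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=2)
        labels = d2.argmin(axis=1)
        for j in range(k):
            members = descriptors[labels == j]
            if len(members):  # leave an empty cluster's centroid unchanged
                codebook[j] = members.mean(axis=0)
    return codebook

def encode_image(descriptors, codebook):
    """Hard-assign an image's descriptors to visual words.

    Returns the k-bin word-count histogram and the mean squared
    quantisation error (distance from each descriptor to its word).
    """
    d2 = ((descriptors[:, None, :] - codebook[None, :, :]) ** 2).sum(axis=2)
    words = d2.argmin(axis=1)
    hist = np.bincount(words, minlength=len(codebook)).astype(np.float64)
    vq_error = float(d2[np.arange(len(descriptors)), words].mean())
    return hist, vq_error

# Usage with random stand-ins for 128-d SIFT descriptors.
rng = np.random.default_rng(42)
train_desc = rng.random((500, 128), dtype=np.float32)
codebook = build_codebook(train_desc, k=16)
hist, vq_error = encode_image(rng.random((40, 128), dtype=np.float32), codebook)
```

The dense (n, k) distance matrix is what makes this naive approach impractical at 50 million descriptors and k=4096 on a CPU. That is the kind of workload GPU-backed libraries such as cuML are designed to accelerate.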

Implementation

The codebase is structured around factory patterns for extensibility:

- Feature extraction: `LocalFeatureExtractorFactory` supporting Harris, Harris-Laplace, SIFT, and Dense SIFT
- Clustering: `ClusteringAlgorithmFactory` wrapping cuML and sklearn implementations
- Classification: `ClassifierFactory` for SVM, RandomForest, and kNN with GPU/CPU backends
- Image transforms: composable pipeline inspired by torchvision conventions
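The factory-plus-pipeline structure described above can be illustrated with a small name-based registry. This is a hypothetical sketch of one common way to build such factories, not the project's actual classes. `Factory`, `Compose`, `Grayscale`, and `Rescale` are illustrative names, and images are assumed to be NumPy arrays of shape (H, W, 3).

```python
from typing import Callable, Dict, List

import numpy as np

class Factory:
    """Hypothetical name -> constructor registry (not the project's API)."""

    def __init__(self) -> None:
        self._registry: Dict[str, Callable[..., object]] = {}

    def register(self, name: str) -> Callable[[type], type]:
        """Class decorator that records a component under `name`."""
        def decorator(cls: type) -> type:
            self._registry[name] = cls
            return cls
        return decorator

    def create(self, name: str, **kwargs) -> object:
        """Instantiate a registered component, forwarding keyword arguments."""
        if name not in self._registry:
            raise ValueError(f"unknown component {name!r}; known: {sorted(self._registry)}")
        return self._registry[name](**kwargs)

class Compose:
    """Apply a list of callables in order, in the spirit of torchvision's Compose."""

    def __init__(self, transforms: List[Callable]) -> None:
        self.transforms = transforms

    def __call__(self, img: np.ndarray) -> np.ndarray:
        for transform in self.transforms:
            img = transform(img)
        return img

transforms = Factory()

@transforms.register("grayscale")
class Grayscale:
    """Average the colour channels: (H, W, 3) -> (H, W)."""
    def __call__(self, img: np.ndarray) -> np.ndarray:
        return img.mean(axis=2)

@transforms.register("rescale")
class Rescale:
    """Divide pixel values by a constant, e.g. 255 to map into [0, 1]."""
    def __init__(self, divisor: float = 255.0) -> None:
        self.divisor = divisor

    def __call__(self, img: np.ndarray) -> np.ndarray:
        return img / self.divisor

# Usage: components are selected by name, e.g. from a grid-search configuration.
pipeline = Compose([transforms.create("grayscale"), transforms.create("rescale", divisor=255.0)])
out = pipeline(np.full((32, 32, 3), 255.0))
```

One benefit of this design is that scripted experiments only need to pass strings. Adding a new detector, clustering backend, or classifier then means registering it under a new name, without touching the calling code.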

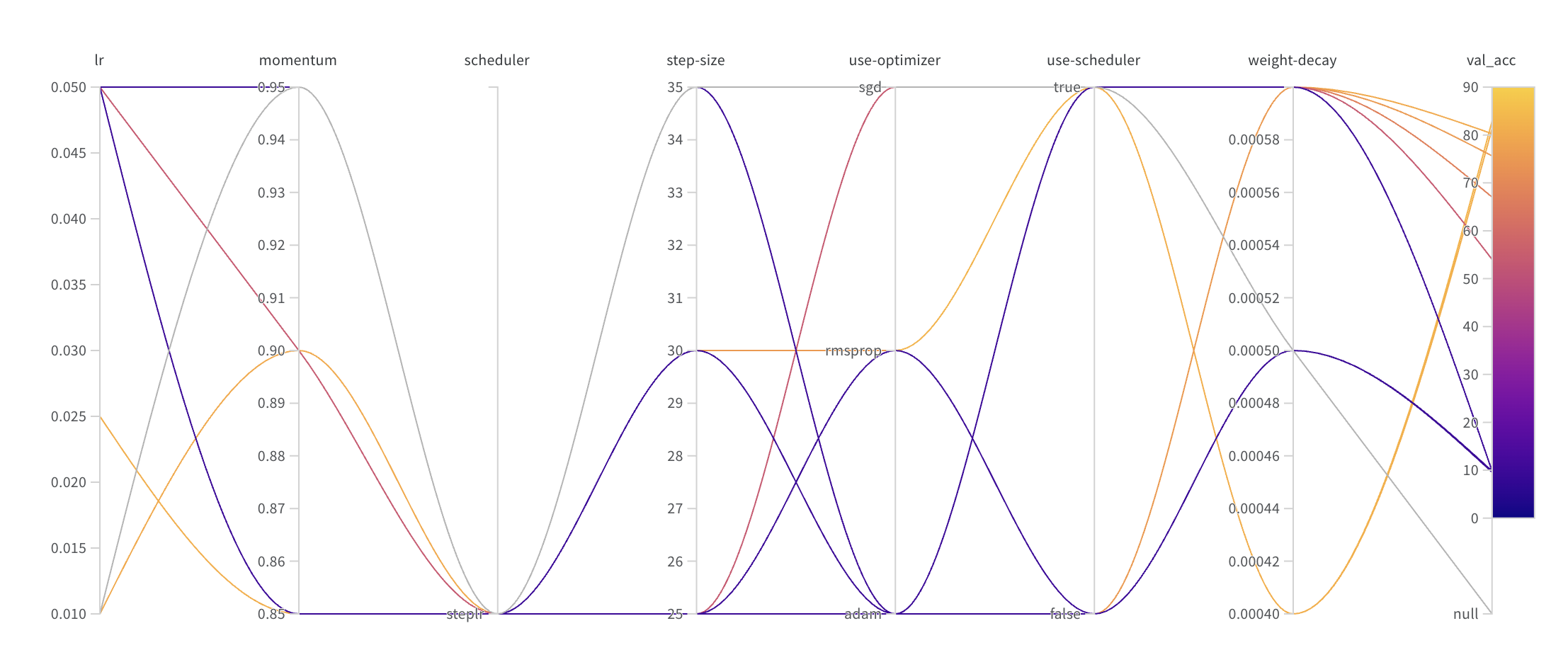

Experiments were distributed across two machines: a local workstation (RTX 4000 Ada, 20GB VRAM) and a DigitalOcean cloud instance (H100, 80GB). The H100 was essential for scaling to 50M keypoints, as the RTX 4000 exhausted its memory on CIFAR-100. Approximately 2,900 BoVW configurations were evaluated through shell-scripted grid searches, while CNN hyperparameters were tuned via wandb sweeps.
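A back-of-envelope estimate shows why 50M keypoints strains a 20GB card. It assumes each keypoint carries a standard 128-dimensional SIFT descriptor stored as float32, and ignores the clustering library's own workspace. The helper name `matrix_bytes` and the 1M-descriptor chunk size are illustrative choices, not values from the project.

```python
def matrix_bytes(rows: int, cols: int, bytes_per_value: int = 4) -> int:
    """Bytes for a dense rows x cols matrix (float32 by default)."""
    return rows * cols * bytes_per_value

GB = 10**9  # decimal gigabytes

# All 50M descriptors resident at once: 50e6 x 128 x 4 bytes.
descriptors = matrix_bytes(50_000_000, 128)

# A naive k-means assignment step over a 1M-descriptor chunk with k = 4096
# visual words would materialise a 1M x 4096 distance matrix.
distances_chunk = matrix_bytes(1_000_000, 4096)

print(f"descriptors: {descriptors / GB:.1f} GB")        # descriptors: 25.6 GB
print(f"distance chunk: {distances_chunk / GB:.1f} GB")  # distance chunk: 16.4 GB
```

Under these assumptions, the descriptor matrix alone exceeds 20GB before any centroids or distances are allocated. It still fits comfortably within the H100's 80GB.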

Key Results

CIFAR-10

| Method | Test Accuracy |
|---|---|
| BoVW (Harris-SIFT, Hellinger-L2, k=4096) | 65.46% |
| BoVW (Harris-SIFT, RBF SVM, k=4096) | 64.83% |
| M-LeNet-5-D (200 epochs, CyclicLR) | 64.58% |
| VGG-16-BN (10 epochs, StepLR) | 83.90% |

CIFAR-100

| Method | Test Accuracy |
|---|---|
| BoVW | Failed (memory exhausted) |
| M-LeNet-5-D (200 epochs) | 28.71% |
| VGG-16-BN (10 epochs) | 59.78% |

Summary

Coursework for the University of Manchester Robotics & Computer Vision module, completed over four weeks. The project implements a complete BoVW pipeline with GPU acceleration via cuML/RAPIDS, supporting multiple keypoint detectors (Harris, Harris-Laplace, SIFT), histogram encodings (L2, TF-IDF, Hellinger), and classifiers. CNN baselines include a modernised LeNet-5 variant with dropout and VGG-16 with batch normalisation, trained using PyTorch with wandb experiment tracking.
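The three histogram encodings named above can be written as row-wise operations on an (n_images, k) matrix of visual-word counts. This is a sketch under assumptions, and the project's exact formulas may differ:

- The TF-IDF variant uses one common smoothed inverse document frequency, log(N / (df + 1)) + 1.
- "Hellinger" is taken to mean L1 normalisation followed by an element-wise square root.
- The function names are hypothetical.

```python
import numpy as np

EPS = 1e-12  # guards against division by zero for empty histograms

def l2_encode(H: np.ndarray) -> np.ndarray:
    """Scale each histogram (row) to unit Euclidean length."""
    return H / (np.linalg.norm(H, axis=1, keepdims=True) + EPS)

def tfidf_encode(H: np.ndarray) -> np.ndarray:
    """Term frequency x smoothed inverse document frequency, then L2."""
    tf = H / (H.sum(axis=1, keepdims=True) + EPS)
    df = (H > 0).sum(axis=0)                 # number of images containing each word
    idf = np.log(H.shape[0] / (df + 1)) + 1  # more common words get smaller weights
    return l2_encode(tf * idf)

def hellinger_encode(H: np.ndarray) -> np.ndarray:
    """L1-normalise each histogram, then take the element-wise square root."""
    return np.sqrt(H / (H.sum(axis=1, keepdims=True) + EPS))

# Two toy images over a 4-word vocabulary.
H = np.array([[4.0, 0.0, 0.0, 0.0],
              [1.0, 1.0, 1.0, 1.0]])
hell = hellinger_encode(H)  # rows are approximately [1, 0, 0, 0] and [0.5, 0.5, 0.5, 0.5]
tfidf = tfidf_encode(H)
```

A useful property of the Hellinger encoding is that the dot product of two encoded histograms equals the Bhattacharyya coefficient of the underlying word distributions. A linear classifier on these features therefore behaves like one using the Hellinger kernel. The encoded rows already have unit L2 norm, so a further L2 step (as the "Hellinger-L2" label in the CIFAR-10 table may indicate) changes little numerically.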